Linear dependence and independence are fundamental concepts in linear algebra that underpin more advanced mathematical theory and its applications. To grasp them fully, we need to look at their definitions, implications, and practical applications across various fields.

Linear independence and dependence describe the relationships among vectors within a vector space. These properties are critical for solving systems of linear equations, performing transformations, and analyzing data sets. Linear independence means that no vector in a set can be written as a linear combination of the other vectors in the set, so each vector adds a new direction to the span of the set. Conversely, linear dependence means that at least one vector can be expressed as a linear combination of the others, indicating redundancy within the set.

Key Insights

- Linear independence confirms the unique contribution of each vector in a set.

- Understanding linear dependence aids in identifying redundancy in data sets.

- These concepts are essential for resolving systems of linear equations efficiently.

Understanding Linear Independence

A set of vectors is linearly independent when none of them can be expressed as a linear combination of the others. To make this precise, consider a set of vectors {v₁, v₂, ..., vₙ}. If there are scalars c₁, c₂, ..., cₙ, not all zero, such that c₁v₁ + c₂v₂ + ... + cₙvₙ = 0, then these vectors are linearly dependent. Otherwise, if the only solution to this equation is c₁ = c₂ = ... = cₙ = 0, the vectors are linearly independent. This property is crucial in many applications: for example, a basis of a vector space must be a linearly independent set that spans the space.
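The definition can be checked mechanically: a set of n vectors is linearly independent exactly when the matrix formed from them has rank n. The following is a minimal sketch using only the Python standard library; the function names `rank` and `is_linearly_independent` and the sample vectors are illustrative choices, not part of the text above. It uses exact fractions to avoid floating-point tolerance questions.

```python
from fractions import Fraction


def rank(vectors):
    """Return the rank of the matrix whose rows are the given vectors."""
    rows = [[Fraction(x) for x in v] for v in vectors]
    rank_count = 0
    n_cols = len(rows[0]) if rows else 0
    for col in range(n_cols):
        # Look for a row at or below the pivot position with a nonzero entry.
        pivot = next(
            (r for r in range(rank_count, len(rows)) if rows[r][col] != 0),
            None,
        )
        if pivot is None:
            continue  # no pivot in this column
        rows[rank_count], rows[pivot] = rows[pivot], rows[rank_count]
        # Eliminate this column from every row below the pivot row.
        for r in range(rank_count + 1, len(rows)):
            factor = rows[r][col] / rows[rank_count][col]
            rows[r] = [a - factor * b for a, b in zip(rows[r], rows[rank_count])]
        rank_count += 1
    return rank_count


def is_linearly_independent(vectors):
    """A set of n vectors is independent exactly when its rank equals n."""
    return rank(vectors) == len(vectors)


# Illustrative inputs (assumed for this sketch).
independent = [(1, 0, 0), (0, 1, 0), (0, 0, 1)]
dependent = [(1, 2, 3), (2, 4, 6), (0, 1, 1)]  # second row is twice the first

print(is_linearly_independent(independent))  # True
print(is_linearly_independent(dependent))    # False
```

For large floating-point matrices, a numerical library with a tolerance-based rank routine would usually be a better fit than exact elimination.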

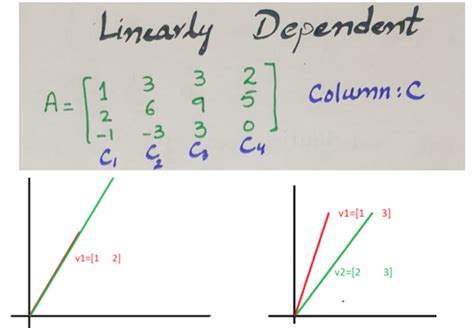

Unpacking Linear Dependence

A set of vectors is linearly dependent when at least one of them can be written as a linear combination of the others. For example, if we have vectors v₁ and v₂ and it's possible to find scalars a and b, not both zero, such that av₁ + bv₂ = 0, then v₁ and v₂ are dependent. For two vectors, this is equivalent to one being a scalar multiple of the other. Linear dependence shows up in many practical scenarios, such as when analyzing data sets where redundancy or correlation might need to be minimized.
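As a small worked illustration (the specific vectors are chosen here for the example), take v₁ = (1, 2) and v₂ = (2, 4). They are dependent, because a nontrivial combination gives the zero vector:

$$
2\,v_1 - v_2 = 2\begin{pmatrix}1\\2\end{pmatrix} - \begin{pmatrix}2\\4\end{pmatrix} = \begin{pmatrix}0\\0\end{pmatrix}, \qquad a = 2,\; b = -1 .
$$

By contrast, for v₁ = (1, 2) and v₂ = (1, 3) the equation av₁ + bv₂ = 0 forces both scalars to vanish, so the pair is independent:

$$
\begin{aligned}
a + b &= 0 \\
2a + 3b &= 0
\end{aligned}
\;\Longrightarrow\; a = -b,\quad -2b + 3b = b = 0,\quad a = 0 .
$$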

Real-world Implications

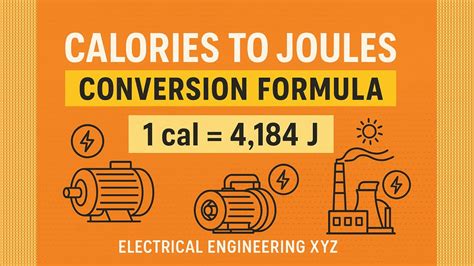

In practical scenarios, understanding linear dependence is crucial. For instance, in data analysis and machine learning, recognizing dependent features is key to improving model performance. Techniques like Principal Component Analysis (PCA) address correlated features by projecting the data onto a smaller set of orthogonal, and therefore linearly independent, directions that retain most of the variance. Similarly, in electrical engineering, the linear independence of signal vectors is fundamental for communication systems, since it allows the receiver to separate the transmitted signals from one another.

How do linear independence and dependence affect machine learning models?

Linear independence means that each feature carries information the others do not. Linear dependence means that some feature is an exact linear combination of others, so it adds no new information. In linear models this makes the coefficients non-unique and can make fitting numerically unstable, and redundant features add cost without adding signal. Removing dependent features can improve the efficiency of machine learning models and often their stability and interpretability.
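One way to see the redundancy concretely: when a feature is a linear combination of others, different weight vectors of a linear model can produce identical predictions, so the data cannot tell them apart. The sketch below uses hypothetical toy data in which the third feature is the sum of the first two; the names `predict`, `weights_a`, and `weights_b` are illustrative.

```python
# Hypothetical toy data: the third feature equals the sum of the first two,
# so the three feature columns are linearly dependent.
raw = [(1, 2), (3, 1), (0, 4), (2, 2)]
features = [(x1, x2, x1 + x2) for x1, x2 in raw]


def predict(weights, sample):
    """Prediction of a linear model without an intercept."""
    return sum(w * x for w, x in zip(weights, sample))


# Two different weight vectors: one uses the first two features,
# the other uses only the redundant third feature.
weights_a = (1, 1, 0)
weights_b = (0, 0, 1)

predictions_a = [predict(weights_a, s) for s in features]
predictions_b = [predict(weights_b, s) for s in features]

print(predictions_a)                   # [3, 4, 4, 4]
print(predictions_a == predictions_b)  # True
```

Dropping the third feature removes the ambiguity without losing any information the model could use.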

Can linear dependence impact the solutions to systems of linear equations?

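Yes. When the equations of a system are linearly dependent, at least one of them is redundant. For a square system (as many equations as unknowns), dependence among the rows of the coefficient matrix rules out a unique solution: the system has either infinitely many solutions or none, depending on whether the right-hand sides are consistent with the dependence. Identifying linear dependence is therefore an essential step in deciding whether a unique solution exists.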
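A small illustration with made-up numbers: in both systems below, the left-hand side of the second equation is twice that of the first, so the coefficient rows are dependent.

$$
\begin{aligned}
x + y &= 2 \\
2x + 2y &= 4
\end{aligned}
\qquad\text{versus}\qquad
\begin{aligned}
x + y &= 2 \\
2x + 2y &= 5
\end{aligned}
$$

In the first system the second equation repeats the first, so every pair (x, 2 − x) is a solution and there are infinitely many. In the second system, doubling the first equation gives 2x + 2y = 4, which contradicts 2x + 2y = 5, so there is no solution.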

In conclusion, linear independence and dependence are not just mathematical abstractions but tools with wide-ranging practical significance. Understanding these concepts enables better management of data, more efficient problem-solving, and enhanced analytical capabilities across multiple disciplines.