The Gram-Schmidt algorithm is a standard procedure in linear algebra for converting a linearly independent set of vectors into an orthogonal set with the same span. It is used in signal processing, numerical analysis, and machine learning. Many computations simplify in an orthogonal basis (projections reduce to dot products, for example), so the algorithm makes a wide range of problems easier to work with.

Core Concept of the Gram-Schmidt Algorithm

The Gram-Schmidt algorithm takes a linearly independent set of vectors and transforms it into a set of orthogonal vectors with the same span. It does this by subtracting from each vector its projection onto the space spanned by the previously computed orthogonal vectors. If each resulting vector is also normalized to unit length, the set is orthonormal.
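
A small worked example may make the projection step concrete. The vectors v_1 = (1, 1) and v_2 = (1, 0) are chosen here purely for illustration, and w_2 is a name introduced for the intermediate vector before normalization.

```latex
\begin{aligned}
u_1 &= \frac{v_1}{\lVert v_1 \rVert} = \tfrac{1}{\sqrt{2}}\,(1,\ 1) \\
\operatorname{proj}_{u_1}(v_2) &= (u_1 \cdot v_2)\, u_1
  = \tfrac{1}{\sqrt{2}} \cdot \tfrac{1}{\sqrt{2}}\,(1,\ 1)
  = \left(\tfrac{1}{2},\ \tfrac{1}{2}\right) \\
w_2 &= v_2 - \operatorname{proj}_{u_1}(v_2)
  = \left(\tfrac{1}{2},\ -\tfrac{1}{2}\right) \\
u_2 &= \frac{w_2}{\lVert w_2 \rVert} = \tfrac{1}{\sqrt{2}}\,(1,\ -1)
\end{aligned}
```

Here u_1 · u_2 = 0 and both vectors have unit length, while {u_1, u_2} spans the same plane as {v_1, v_2}.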

Key Insights

- The Gram-Schmidt algorithm provides a systematic way to convert any linearly independent set of vectors into an orthonormal basis. This construction underlies one standard way of computing the QR decomposition of a matrix.

- Working in an orthonormal basis keeps many numerical linear algebra operations well conditioned, because a matrix with orthonormal columns has condition number 1. In floating-point arithmetic, however, the algorithm itself can lose orthogonality, so the computed vectors are worth checking.

- Implement the algorithm in software for tasks that require an orthonormal basis, such as principal component analysis (PCA) in data science.
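
As a starting point for such an implementation, the following is a minimal sketch in plain Python. It follows the classical procedure described in this article and assumes the input vectors are linearly independent. The helper names `dot` and `gram_schmidt` and the sample vectors are illustrative choices, not part of any library.

```python
import math


def dot(a, b):
    """Euclidean inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def gram_schmidt(vectors):
    """Return an orthonormal list spanning the same space as `vectors`.

    Assumption: the input vectors are linearly independent. A dependent
    input can lead to a division by zero during normalization.
    """
    basis = []
    for v in vectors:
        w = list(v)
        for u in basis:
            # Classical variant: the coefficient uses the original vector v.
            coeff = dot(u, v)
            # Remove the component of v that lies along u.
            w = [wi - coeff * ui for wi, ui in zip(w, u)]
        norm = math.sqrt(dot(w, w))
        basis.append([wi / norm for wi in w])
    return basis


# Three linearly independent sample vectors in R^3.
basis = gram_schmidt([[1.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 1.0]])
```

A quick sanity check on the result is that `dot(basis[i], basis[j])` is close to 1 when `i == j` and close to 0 otherwise.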

Steps and Mechanics of the Gram-Schmidt Process

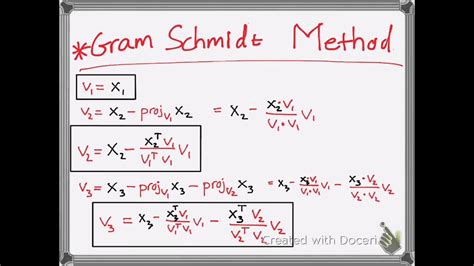

The Gram-Schmidt process builds the orthogonal vectors one at a time from the original set. Given a set of vectors {v_1, v_2, …, v_n}, the first vector u_1 is simply v_1 normalized to unit length. Each subsequent vector u_i is obtained by subtracting from v_i its projections onto the already computed vectors u_1, u_2, …, u_{i-1}, and then normalizing the result. Mathematically, w_i = v_i - ∑_{j=1}^{i-1} proj_{u_j}(v_i) and u_i = w_i / ‖w_i‖, where proj_{u_j}(v_i) = (u_j · v_i) u_j is the projection of v_i onto the unit vector u_j.

Applications of the Gram-Schmidt Algorithm

The utility of the Gram-Schmidt algorithm extends beyond theory into practice. For instance, in numerical methods for differential equations, expanding a solution in an orthonormal basis simplifies the computation of its coefficients. In machine learning, orthonormal vectors appear in algorithms like PCA, which reduces the dimensionality of a dataset while retaining most of its variance. The principal directions in PCA are orthonormal, and the data projected onto them are uncorrelated. Gram-Schmidt can be used to keep a set of candidate directions orthonormal, for example in iterative methods.

FAQ Section

Can the Gram-Schmidt algorithm be applied to any set of vectors?

The Gram-Schmidt algorithm can be run on any set of vectors, but it only produces a full orthonormal set when the input is linearly independent. If a vector is a linear combination of the earlier ones, subtracting its projections leaves the zero vector, and normalizing it requires division by zero. In floating-point arithmetic the remainder may instead be a tiny nonzero vector dominated by rounding error.
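
One common way to handle dependent input is to compare the length of the remainder against a tolerance and skip vectors that fall below it. The sketch below illustrates the idea. The function name `orthonormalize`, the default threshold `tol=1e-10`, and the sample vectors are assumptions made for this example, and a suitable tolerance depends on the scale and precision of the data.

```python
import math


def dot(a, b):
    """Euclidean inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def orthonormalize(vectors, tol=1e-10):
    """Gram-Schmidt that skips vectors depending on earlier ones.

    `tol` is a hypothetical relative threshold: a vector is treated as
    dependent when its remainder is shorter than tol times its own length.
    """
    basis = []
    for v in vectors:
        w = list(v)
        for u in basis:
            # Project the running remainder w; in exact arithmetic this
            # gives the same result as projecting the original v.
            coeff = dot(u, w)
            w = [wi - coeff * ui for wi, ui in zip(w, u)]
        remainder = math.sqrt(dot(w, w))
        length = math.sqrt(dot(v, v))
        if remainder <= tol * length:
            continue  # dependent (or zero) vector: nothing new to add
        basis.append([wi / remainder for wi in w])
    return basis


# The second vector is exactly twice the first, so it is skipped.
vectors = [[1.0, 2.0, 3.0], [2.0, 4.0, 6.0], [0.0, 1.0, 0.0]]
basis = orthonormalize(vectors)
print(len(basis))  # prints 2
```

The number of vectors returned is then an estimate of the dimension of the span, subject to the chosen tolerance.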

What are the limitations of the Gram-Schmidt algorithm?

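The primary limitation is numerical instability in finite-precision arithmetic: rounding errors accumulate, and the computed vectors can drift away from orthogonality, especially when the input vectors are nearly linearly dependent. Additionally, the cost grows with the square of the number of vectors (roughly proportional to m·n² operations for n vectors of dimension m), which makes the algorithm expensive for very large sets of vectors.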
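
The loss of orthogonality can be seen on a small example. The sketch below compares the classical procedure with a widely used rearrangement, usually called modified Gram-Schmidt, which computes each projection coefficient from the running remainder instead of the original vector. The test vectors and the value `eps = 1e-8` are assumptions chosen so that 1 + eps² rounds to 1 in double precision, and the function names are illustrative.

```python
import math


def dot(a, b):
    """Euclidean inner product of two equal-length sequences."""
    return sum(x * y for x, y in zip(a, b))


def gram_schmidt(vectors, modified):
    """Orthonormalize `vectors` with the classical or modified variant."""
    basis = []
    for v in vectors:
        w = list(v)
        for u in basis:
            # Classical: coefficient from the original vector v.
            # Modified: coefficient from the running remainder w.
            coeff = dot(u, w) if modified else dot(u, v)
            w = [wi - coeff * ui for wi, ui in zip(w, u)]
        norm = math.sqrt(dot(w, w))
        basis.append([wi / norm for wi in w])
    return basis


def orthogonality_error(basis):
    """Largest |u_i . u_j| over distinct pairs; 0 means exactly orthogonal."""
    return max(
        abs(dot(basis[i], basis[j]))
        for i in range(len(basis))
        for j in range(i)
    )


# Nearly parallel input vectors: they differ only in tiny components.
eps = 1e-8
vectors = [
    [1.0, eps, 0.0, 0.0],
    [1.0, 0.0, eps, 0.0],
    [1.0, 0.0, 0.0, eps],
]

classical_error = orthogonality_error(gram_schmidt(vectors, modified=False))
modified_error = orthogonality_error(gram_schmidt(vectors, modified=True))
print(classical_error, modified_error)
```

With these inputs in IEEE double precision, the classical variant produces two output vectors whose dot product is about 0.5, while the modified variant keeps every pairwise dot product on the order of 1e-8. Both variants perform the same number of operations, so the difference lies only in the order of the arithmetic.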

This article has outlined how the Gram-Schmidt algorithm works and where it is used across scientific and engineering fields. Its role in constructing orthonormal bases, together with the limitations noted above, should guide when and how to apply it in computational work.