Perceptual organization is a fundamental aspect of how humans interpret visual scenes. Bottom-up processing is a key mechanism within this framework, and it has implications for fields such as psychology, cognitive science, and computer vision. Understanding bottom-up processing gives insight into how sensory information is organized and interpreted in both natural and artificial systems. This article explains the concept, relates it to edge detection and convolutional neural networks, and works through a practical example.

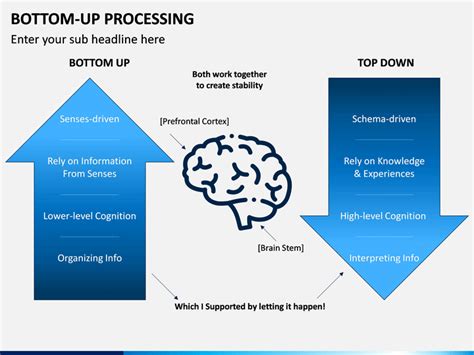

To grasp bottom-up processing, we must first contrast it with top-down processing. Top-down processing relies on prior knowledge and expectations to interpret sensory information, whereas bottom-up processing is data-driven: it starts from the sensory input itself and builds perceptions up from it, rather than being guided by existing knowledge. This data-driven approach is essential for recognizing basic elements such as colors, shapes, and textures, which serve as the building blocks for more complex perceptions.

Key Insights

- Bottom-up processing is essential for recognizing basic elements such as colors, shapes, and textures.

- This approach offers insights into how sensory information is organized at a fundamental level.

- Implementing bottom-up processing in artificial systems can enhance pattern recognition and object detection.

Understanding bottom-up processing starts with the recognition of simple, low-level features. In vision, this involves detecting edges, colors, and contrasts. For example, when we observe a scene, our visual system first identifies the edges of objects, then combines these into larger shapes and textures. This hierarchical process helps us understand the overall structure of the visual scene.
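
The step from edges to shapes can be sketched in a few lines of Python. This is a minimal illustration under simplifying assumptions: edges are assumed to be already detected and given as a grid of `#` (edge) and `.` (background) characters, and `group_edges` is a hypothetical helper that treats each set of touching edge pixels as one candidate shape. Biological vision and production vision systems use far richer grouping cues than simple adjacency.

```python
from collections import deque


def group_edges(edge_map):
    """Group 8-connected edge pixels ('#') into components.

    Returns one bounding box (top, left, bottom, right) per component,
    in the order the components are first encountered (row by row).
    """
    rows, cols = len(edge_map), len(edge_map[0])
    seen = [[False] * cols for _ in range(rows)]
    boxes = []
    for r in range(rows):
        for c in range(cols):
            if edge_map[r][c] != "#" or seen[r][c]:
                continue
            # Breadth-first flood fill from an unvisited edge pixel.
            queue = deque([(r, c)])
            seen[r][c] = True
            top, left, bottom, right = r, c, r, c
            while queue:
                y, x = queue.popleft()
                top, bottom = min(top, y), max(bottom, y)
                left, right = min(left, x), max(right, x)
                # Visit all 8 neighbours that are unvisited edge pixels.
                for dy in (-1, 0, 1):
                    for dx in (-1, 0, 1):
                        ny, nx = y + dy, x + dx
                        if (0 <= ny < rows and 0 <= nx < cols
                                and edge_map[ny][nx] == "#" and not seen[ny][nx]):
                            seen[ny][nx] = True
                            queue.append((ny, nx))
            boxes.append((top, left, bottom, right))
    return boxes


# Hypothetical edge map: a closed outline on the left, a small block on the right.
edges = [
    "####....",
    "#..#....",
    "####..##",
    "......##",
]
print(group_edges(edges))
```

Here the closed outline in the top-left and the small block on the right are reported as two separate shapes, each summarized by its bounding box.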

Bottom-up processing therefore supplies the early stages of this hierarchy: low-level features are extracted first and then integrated into higher-level constructs. This is consistent with findings in vision science, where neurons in early visual areas such as the primary visual cortex (V1) have been shown to respond selectively to simple features like edge orientation, with their outputs passed on to later areas that represent more complex structure.

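A related technical consideration is how edge detection algorithms work in computer vision. These algorithms identify sudden changes in pixel intensity, which correspond to edges in the image. The Canny edge detector, for example, smooths the image, computes the intensity gradient at each pixel, keeps only pixels where the gradient magnitude is a local maximum along the gradient direction, and then applies two thresholds (hysteresis) to decide which of those pixels form edges. This is analogous to how the human visual system treats edges as fundamental visual cues.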
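
The core idea, marking pixels where intensity changes sharply, can be sketched in Python with the Sobel operator. This is a simplified gradient-threshold detector rather than a full Canny implementation: it omits Gaussian smoothing, non-maximum suppression, and hysteresis thresholding, and `gradient_edges` and its `threshold` value are illustrative assumptions.

```python
import math

# 3x3 Sobel kernels: respond to horizontal and vertical intensity changes.
SOBEL_X = [[-1, 0, 1], [-2, 0, 2], [-1, 0, 1]]
SOBEL_Y = [[-1, -2, -1], [0, 0, 0], [1, 2, 1]]


def gradient_edges(image, threshold):
    """Mark interior pixels whose Sobel gradient magnitude exceeds threshold.

    Border pixels are left at 0 because the 3x3 kernel does not fit there.
    """
    rows, cols = len(image), len(image[0])
    edges = [[0] * cols for _ in range(rows)]
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            gx = gy = 0
            for i in range(3):
                for j in range(3):
                    pixel = image[r + i - 1][c + j - 1]
                    gx += SOBEL_X[i][j] * pixel
                    gy += SOBEL_Y[i][j] * pixel
            if math.hypot(gx, gy) > threshold:
                edges[r][c] = 1
    return edges


# A dark region (0) next to a bright region (255): a single vertical edge.
image = [[0, 0, 0, 255, 255, 255] for _ in range(5)]
for row in gradient_edges(image, threshold=100):
    print(row)
```

The boundary between the dark and bright halves is marked in the two columns that straddle it, giving an edge two pixels wide; thinning such responses to one pixel is what Canny's non-maximum suppression step is for. Libraries such as OpenCV provide a complete implementation as `cv2.Canny`.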

An actionable recommendation for professionals working in computer vision or machine learning is to incorporate bottom-up processing models into their algorithms. Convolutional neural networks (CNNs), for instance, loosely mirror the hierarchical structure of the human visual system: early layers tend to respond to simple features such as oriented edges and color contrasts, and deeper layers combine these into parts and whole objects. Building recognition up from fundamental features in this way has made CNNs a standard approach for object detection and recognition in images.
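
To illustrate the layered idea (not how a real CNN is trained), the Python sketch below hand-sets two layers. Layer 1 applies two small edge filters, one for vertical and one for horizontal dark-to-bright transitions; layer 2 combines the two edge maps so that it only responds where both are present, which happens at a corner. The filter values, the saturating `clip01` activation, and the bias of 1.5 are assumptions chosen for this toy example; a trained CNN learns its filters and weights from data and uses many more of them.

```python
def conv2d(image, kernel):
    """Valid-mode 2D cross-correlation, the operation CNN layers apply."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(kernel[i][j] * image[r + i][c + j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def clip01(fmap):
    """Saturating activation (an assumption for the toy): clamp to [0, 1]."""
    return [[min(max(v, 0), 1) for v in row] for row in fmap]


# Layer 1: hand-set edge filters (a trained CNN would learn its own).
VERTICAL = [[-1, 1], [-1, 1]]    # dark on the left, bright on the right
HORIZONTAL = [[-1, -1], [1, 1]]  # dark above, bright below


def corner_detector(image):
    v = clip01(conv2d(image, VERTICAL))
    h = clip01(conv2d(image, HORIZONTAL))
    # Layer 2: a 1x1 unit that sums both edge maps, subtracts a bias of 1.5,
    # and applies ReLU, so it only fires where both edge types are present.
    return [[max(a + b - 1.5, 0) for a, b in zip(rv, rh)]
            for rv, rh in zip(v, h)]


# A bright 3x3 square on a dark background.
square = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
for row in corner_detector(square):
    print(row)
```

Only the window at the square's top-left corner responds, because each filter is sensitive to one contrast polarity and a single edge never clears the layer-2 bias. Detecting the other corners and orientations would take more filters, which is one reason real networks use many filters per layer.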

Here’s a practical example of bottom-up processing in action: Imagine you are developing a self-driving car. The car needs to recognize and respond to various objects on the road, such as cars, pedestrians, and traffic signs. Using a bottom-up processing approach, the car’s system first identifies simple features like edges, colors, and textures in the visual scene. These basic elements are then combined to detect more complex objects, which are ultimately used to make driving decisions. By focusing on these low-level features first, the system can enhance its ability to understand and react to the road environment.
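
The staged pipeline described above can be sketched as three small functions in Python: a per-pixel color feature, a grouping step that summarizes the flagged pixels as one region, and a decision rule. Everything here is a hypothetical toy: the color thresholds, the `min_area` value, and the idea of braking for any large red region are assumptions for illustration, and real driving systems rely on learned detectors and far more context.

```python
def red_mask(image, min_red=150, max_other=100):
    """Stage 1 (low-level feature): flag pixels that are strongly red."""
    return [[r >= min_red and g <= max_other and b <= max_other
             for (r, g, b) in row] for row in image]


def red_region(mask):
    """Stage 2 (grouping): bounding box and area of all flagged pixels."""
    coords = [(y, x) for y, row in enumerate(mask)
              for x, flag in enumerate(row) if flag]
    if not coords:
        return None
    ys = [y for y, _ in coords]
    xs = [x for _, x in coords]
    return {"box": (min(ys), min(xs), max(ys), max(xs)), "area": len(coords)}


def decide(region, min_area=4):
    """Stage 3 (decision): brake if a large enough red region is present."""
    if region is not None and region["area"] >= min_area:
        return "brake"
    return "continue"


R, G = (200, 30, 30), (40, 160, 40)  # hypothetical red and green RGB pixels
frame = [
    [G, G, G, G],
    [G, R, R, G],
    [G, R, R, G],
    [G, G, G, G],
]
region = red_region(red_mask(frame))
print(region, decide(region))
```

For this frame, the four red pixels form one region with box `(1, 1, 2, 2)` and area 4, so the rule returns `"brake"`; a frame with no red pixels yields `"continue"`.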

What are the benefits of incorporating bottom-up processing in artificial systems?

Incorporating bottom-up processing in artificial systems such as computer vision algorithms can improve accuracy and efficiency in recognizing and interpreting visual data. By focusing on fundamental features like edges, colors, and textures, these systems can better understand complex scenes and make more informed decisions.

How does bottom-up processing compare to top-down processing in cognitive tasks?

Bottom-up processing relies solely on the sensory input to form perceptions, while top-down processing incorporates prior knowledge and expectations. Bottom-up processing is essential for recognizing basic elements and understanding the structure of sensory information. In contrast, top-down processing aids in making sense of more complex and contextually relevant information. Both are crucial for comprehensive cognitive processing.
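
One common way to model this distinction, used here purely as an illustration, treats bottom-up evidence as a likelihood computed from the input and top-down expectation as a prior, combined with Bayes' rule. The Python sketch below applies this to the classic ambiguous figure that can be read either as the letter "B" or as the number "13"; the probabilities and context labels are hypothetical.

```python
def normalize(scores):
    """Rescale non-negative scores so they sum to 1."""
    total = sum(scores.values())
    return {k: v / total for k, v in scores.items()}


# Bottom-up evidence (hypothetical): the shape matches both readings equally.
likelihood = {"B": 0.5, "13": 0.5}

# Top-down expectations (hypothetical priors set by the surrounding context).
prior_in_word = {"B": 0.9, "13": 0.1}     # neighbours are letters, e.g. "A_C"
prior_in_numbers = {"B": 0.1, "13": 0.9}  # neighbours are numbers, e.g. "12_14"

bottom_up_only = normalize(likelihood)
with_letter_context = normalize(
    {k: likelihood[k] * prior_in_word[k] for k in likelihood})
with_number_context = normalize(
    {k: likelihood[k] * prior_in_numbers[k] for k in likelihood})
print(bottom_up_only, with_letter_context, with_number_context)
```

With bottom-up evidence alone the two readings stay tied at 0.5 each; adding letter context shifts the interpretation toward "B" (0.9), and adding number context shifts it toward "13" (0.9).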

By focusing on bottom-up processing, we can deepen our understanding of perceptual organization and leverage this knowledge to enhance artificial intelligence systems. This approach underscores the importance of fundamental sensory data in forming higher-level perceptions, offering a clear pathway for improvements in fields like computer vision and cognitive science.